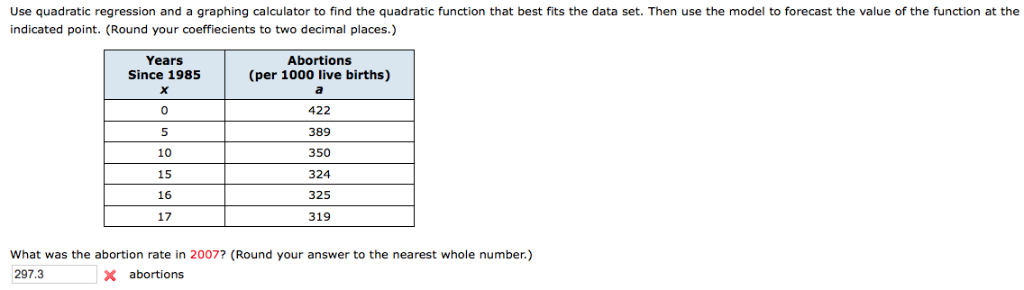

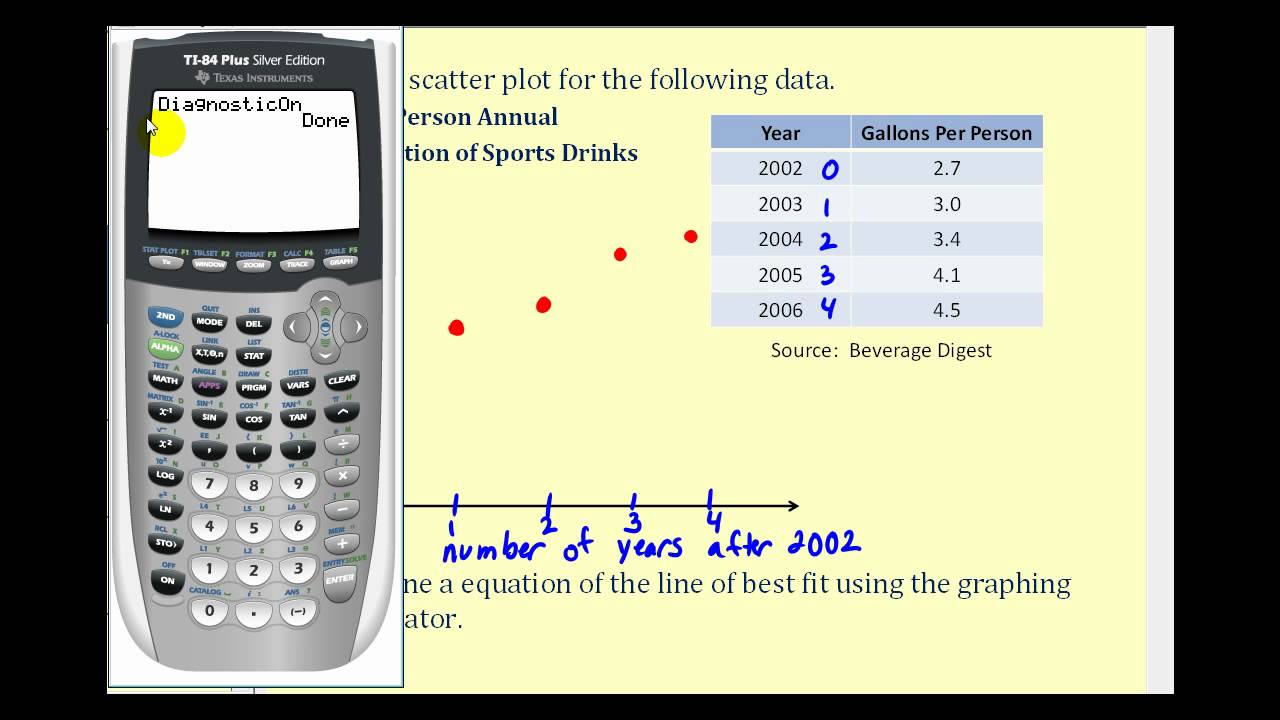

Suppose I'm given a list of ordered pairs and I want to use the Desmos graphing calculator to find the quadratic expression that best matches them. So how am I going to do this? First I add a table and write my ordered pairs in it, in order: 0, -1, 1, -3, and so on and so forth. Once the values are entered, I can see the points graphed in Desmos.

Next, what's the standard form of a quadratic equation? It is $y = ax^2 + bx + c$, where $a$, $b$, and $c$ are constants and $a \neq 0$. In my table, though, the columns are $y_1$ and $x_1$, so I type $y_1 \sim ax_1^2 + bx_1 + c$, using a tilde instead of an equals sign. Desmos then draws the best-fit quadratic curve through the data and gives me the value of $a$, along with $b$ and $c$.

Quadratic regression is a way to model a relationship between two sets of variables. The result is a regression equation that can be used to make predictions about the data. A quadratic regression calculator computes the equation of the quadratic regression function and the associated correlation, and the least squares method can be used to find the quadratic regression equation. If residuals have unequal variance, a weighted least squares estimator may be used to account for that.

An advantage of traditional polynomial regression is that the inferential framework of multiple regression can be used (this also holds when using other families of basis functions such as splines). Some alternatives to polynomial regression make use of a localized form of classical polynomial regression. Another alternative is to use kernelized models such as support vector regression with a polynomial kernel.
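To make the least squares fit concrete, here is a minimal Python sketch of what a quadratic regression calculator might do behind the scenes. It is not how Desmos itself is implemented. The function names `fit_quadratic` and `r_squared`, the optional `weights` argument (for the weighted least squares case), and the sample data are all assumptions made for illustration.

```python
def fit_quadratic(xs, ys, weights=None):
    """Least-squares fit of y = a*x^2 + b*x + c. Returns (a, b, c).

    Hypothetical helper: `weights` (optional) gives one non-negative
    weight per point, which turns this into weighted least squares.
    """
    if weights is None:
        weights = [1.0] * len(xs)

    # Build the 3x3 normal equations (X^T W X) beta = X^T W y,
    # where each row of X is [x^2, x, 1].
    A = [[0.0] * 3 for _ in range(3)]
    rhs = [0.0] * 3
    for x, y, w in zip(xs, ys, weights):
        row = (x * x, x, 1.0)
        for i in range(3):
            rhs[i] += w * row[i] * y
            for j in range(3):
                A[i][j] += w * row[i] * row[j]

    # Solve by Gaussian elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("need at least three distinct x values")
        A[col], A[pivot] = A[pivot], A[col]
        rhs[col], rhs[pivot] = rhs[pivot], rhs[col]
        for r in range(col + 1, 3):
            factor = A[r][col] / A[col][col]
            for k in range(col, 3):
                A[r][k] -= factor * A[col][k]
            rhs[r] -= factor * rhs[col]

    # Back substitution.
    beta = [0.0] * 3
    for i in (2, 1, 0):
        known = sum(A[i][j] * beta[j] for j in range(i + 1, 3))
        beta[i] = (rhs[i] - known) / A[i][i]
    return tuple(beta)


def r_squared(xs, ys, coeffs):
    """Coefficient of determination of the fitted quadratic."""
    a, b, c = coeffs
    mean_y = sum(ys) / len(ys)
    ss_res = sum((y - (a * x * x + b * x + c)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1.0 - ss_res / ss_tot


# Made-up points that lie exactly on y = 2x^2 - 3x + 1.
xs = [-2, -1, 0, 1, 2]
ys = [15, 6, 1, 0, 3]
coeffs = fit_quadratic(xs, ys)  # close to (2.0, -3.0, 1.0)
fit_quality = r_squared(xs, ys, coeffs)  # close to 1.0 for exact data
```

Solving the normal equations directly is fine for a handful of points like this. For larger or badly scaled data, a library routine based on a more stable decomposition would be the safer choice.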

The goal of polynomial regression is to model a non-linear relationship between the independent and dependent variables (technically, between the independent variable and the conditional mean of the dependent variable). This is similar to the goal of nonparametric regression, which aims to capture non-linear regression relationships. Therefore, non-parametric regression approaches such as smoothing can be useful alternatives to polynomial regression. A quadratic regression calculator finds the coefficients of $y = ax^2 + bx + c$, the least-squares best-fit quadratic polynomial, which is a special case of multiple linear regression.
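As one illustration of smoothing, here is a small Python sketch of a Nadaraya-Watson kernel smoother with a Gaussian kernel. The text above does not single out this particular smoother, so treat the choice as an assumption. The function name `kernel_smooth` and the `bandwidth` parameter are hypothetical names for this example.

```python
import math


def kernel_smooth(xs, ys, x0, bandwidth=1.0):
    """Estimate y at x0 as a weighted average of the observed ys.

    Points whose x is close to x0 get large Gaussian weights and
    distant points get weights near zero, so the estimate is local.
    If x0 is extremely far from every data point, the weights can
    underflow to zero and the division below will fail.
    """
    weights = [math.exp(-0.5 * ((x - x0) / bandwidth) ** 2) for x in xs]
    total = sum(weights)
    return sum(w * y for w, y in zip(weights, ys)) / total


# Made-up data: estimate the curve at x = 0 from three points.
estimate = kernel_smooth([-1.0, 0.0, 1.0], [1.0, 0.0, 1.0], x0=0.0)
```

A smaller `bandwidth` follows the data more closely, while a larger one gives a smoother curve. Unlike a polynomial fit, no single formula describes the whole curve.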

The goal of regression analysis is to model the expected value of a dependent variable y in terms of the value of an independent variable (or vector of independent variables) x. Polynomial regression models are usually fit using the method of least squares. The least-squares method minimizes the variance of the unbiased estimators of the coefficients, under the conditions of the Gauss–Markov theorem.

The least-squares method was published in 1805 by Legendre and in 1809 by Gauss. The first design of an experiment for polynomial regression appeared in an 1815 paper of Gergonne. In the twentieth century, polynomial regression played an important role in the development of regression analysis, with a greater emphasis on issues of design and inference. More recently, the use of polynomial models has been complemented by other methods, with non-polynomial models having advantages for some classes of problems.

Figure: A cubic polynomial regression fit to a simulated data set. The confidence band is a 95% simultaneous confidence band constructed using the Scheffé approach.

A drawback of polynomial bases is that the basis functions are "non-local", meaning that the fitted value of y at a given value x = x0 depends strongly on data values with x far from x0. In modern statistics, polynomial basis functions are used along with new basis functions, such as splines, radial basis functions, and wavelets. These families of basis functions offer a more parsimonious fit for many types of data.
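For reference, the least-squares fit of a polynomial model can be written compactly in matrix form. The notation below ($m$ data points, degree $n$, coefficients $\beta_j$, errors $\varepsilon_i$) is an assumption introduced here and does not come from the text above.

$$
y_i = \beta_0 + \beta_1 x_i + \beta_2 x_i^2 + \cdots + \beta_n x_i^n + \varepsilon_i, \qquad i = 1, \dots, m
$$

$$
\mathbf{y} = X \boldsymbol{\beta} + \boldsymbol{\varepsilon}, \qquad X_{ij} = x_i^{\,j}, \quad j = 0, \dots, n
$$

$$
\hat{\boldsymbol{\beta}} = \left( X^{\top} X \right)^{-1} X^{\top} \mathbf{y}
$$

The inverse exists when the data contain at least $n + 1$ distinct $x$ values. The quadratic case is $n = 2$, with $\beta_0 = c$, $\beta_1 = b$, and $\beta_2 = a$ in $y = ax^2 + bx + c$.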
